Timnit Gebru was fired over the power of words and the fear they can bring. These opinions are solely mine, informed by my observations, and do not represent any investment advice whatsoever.

If I told you that one of the world’s most powerful, valuable, and influential companies fired a prominent Black female employee for calling out an environmental risk and then attempted to gaslight the whole thing, you might think I was crazy! Yet somehow that’s the precise journey Google has been on since early December 2020.

After working on a paper on how language models were inefficiently trained, leading to an environmental risk that can’t be ignored, Timnit Gebru, a co-lead in AI Ethics, was fired from Google. Google attempted to say that she resigned, which she objected to and corrected.

I choose to believe Gebru. Since her firing, other voices from Google have shown their support for her and started calling out the company’s own bias, including the newly fired Margaret Mitchell, the other AI Ethics co-lead, who simply tweeted:

This entire affair has broken trust with AI researchers, as written about here by Ali Alkhatib. While Alkhatib comes at this problem from a researcher’s perspective and Gebru and others have tackled it from the D&I/cultural perspective, I’m going to tackle it from another angle that should make us all question the company’s leadership and direction.

What is ESG?

First, a primer. Capital Markets firms are aligning purpose and profit. They examine how companies operate across Environmental, Social, and Corporate Governance (ESG) factors and allocate capital accordingly. These are material risks that can directly impact a company’s bottom line.

Non-material risk: For a retailer, removing plastic straws from their break room might help the environment, but doesn’t impact the bottom line.

Material risk: For a toy manufacturer, shifting to sustainably sourced plastics helps the environment and impacts the bottom line.

Data providers conduct analysis on public companies and their alignment to material ESG factors and surface that information to the markets. Let’s look at two of Google’s existing ratings. Note that these ratings represent proprietary research which is not surfaced publicly beyond the broad strokes.

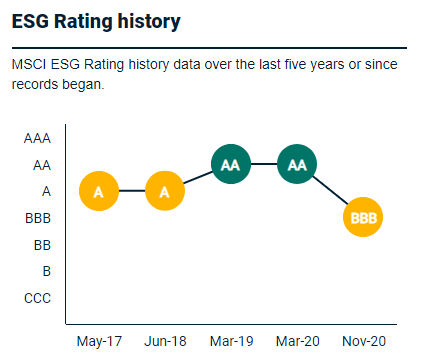

As of November 2020, right before Gebru’s firing, Google was downgraded to a BBB on their MSCI ESG rating. MSCI, a data and ratings agency, cites Alphabet (Google’s parent) as a laggard across 3 areas: Corporate Governance, Corporate Behavior, and Opportunities in CleanTech. However, MSCI considers Google a leader in Privacy & Data Security, Human Capital Development, and Carbon Emissions.

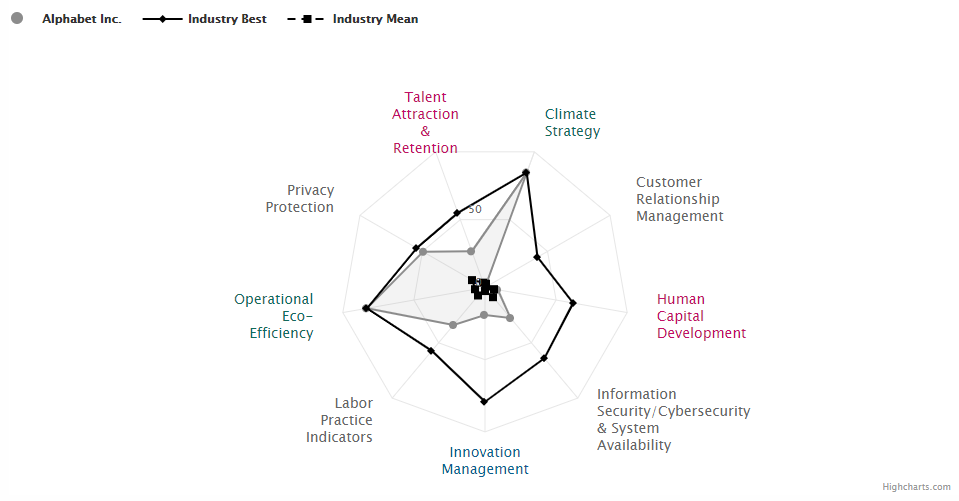

We can also check where S&P Global rated Alphabet. The relevant areas to examine would be the low ratings for Talent Attraction & Retention and Human Capital Development. What’s interesting, but not surprising, is how high they rate on Climate Strategy and Operational Eco-Efficiency.

Google’s ongoing public commitment to the environment, culminating in this September 2020 announcement (although there is a conversation around carbon offsets to be had), and their overall sustainability strategy add up to a clear, well-defined approach based on philanthropy and materiality.

This type of data analysis, along with a Capital Markets firm’s own internal analysis, could be surfaced to retail investors who are aligning to their values, institutional investors creating funds, ESG-linked bonds or loans, and other financial vehicles.

We must understand ESG if we’re going to look at it through this context.

Google’s Nightmare ESG Cocktail

Google has a reputation as a leader in AI with many companies seeking out their expertise and services. Google Cloud Platform and its AI capabilities represent financial growth levers for Google. We know from the mountain of work on AI Ethics, as periodically illustrated by the Montreal AI Ethics Institute (MAIEI), just how important this conversation is (for a great writeup on the Gebru firing from an AI Ethics/gaslighting lens, check the January 2021 “The State of AI Ethics” report). At close to 200 pages, this regularly published report is just one point-in-time glimpse at the continual work being carried out in this field.

And so, if AI Ethics matters to the future of AI, it must be material to Google as well. Consider the fines and regulatory scrutiny they could face if their algorithms were suddenly uncovered to have promoted bias or incorrectly made mature suggestions to children. There would be serious financial implications. Having a diverse, well-respected, proven co-lead of the AI Ethics team had a Social impact since vocal and diverse voices help to avoid ESG risks with their unique perspectives. Without a diverse and trustworthy eye on AI Ethics, Google is putting the company and shareholders at risk.

In fact, MAIEI put these ideas together themselves in a snarky tweet:

As shown here, Google understood the materiality of AI Ethics back in August 2020! Gebru’s active engagement with AI Ethics was the proof that diversity is valuable, especially in these ‘tricky’ cases. There are many cases that show just how valuable diversity is to a company, tricky subject or not.

As of February 2021, Google has appointed Marian Croak, a Black, female lead for AI Ethics, but the damage has already been done and the statement made is irrelevant unless trust can be restored. For Google, having a new Black, female AI Ethics leader is now less material than carrying out important, trusted AI Ethics work with diverse staff who can be confident in their papers. When Google fired Gebru, it switched the focus of diverse voices to another material risk: that of trust.

Only time will tell if this move restores trust, and even if it does, outside peer reviewers will approach Google’s research with skepticism, if they engage at all.

As a cloud provider, Google also has material Environmental risks, which is why its sustainability strategy is so important. A cloud company can’t run one of the world’s largest datacenter footprints and not align sustainability to its operations.

In June 2019, New Scientist published an article warning “Creating an AI can be five times worse for the planet than a car.” Chris Priest from the University of Bristol pointed out, “From an energy perspective, and from a carbon reduction perspective, we should be thinking about designing the services and making sure the algorithms are efficient as possible.”

Google, and all cloud providers, face similar risks of local and global biodiversity/climate impacts at the scale at which they operate their datacenters.

What’s interesting to me about Gebru’s paper is that she gave Google a gift. In showing the environmental impact of language models and how they impact marginalized groups, she identified obstacles that could have created a discussion on how to mitigate future business risk. Google’s leadership failed to recognize this and instead embarked on a path that sowed uncertainty across their employee base and the larger field of AI Ethics. This falls squarely in the Corporate Governance pillar of ESG. They now have a bigger challenge: building back the trust of their customers, researchers, and investors.

The Risk Lies in the Markets and Beyond

Every company has problems and every leader is fallible, yet these missteps by Google, and the now-talked-about culture that underpins them, could lead to serious financial consequences and a crisis of market confidence as the conversation continues to evolve.

Consider the Morningstar NAACP Minority Empowerment fund. Today, Google is one of the top 10 holdings, but with these issues coming to light, will they remain? Sure, there is a balance of diversity initiatives Google has invested in, but if their core is broken, how do you weigh them? Philanthropy, while admirable, is not an indication of future performance, but your culture is.

Outside of the markets, customers may start wondering if Google is the right partner for their AI workloads and eventually, consumers could start questioning Google’s motivations through their purchasing power.

Google and other big tech firms have been in the news as ESG has continued to grow. The Netflix documentary “The Social Dilemma” has questioned what Google (YouTube) and others are doing with our data and how we’re being manipulated. Google, Twitter, and Facebook have also been questioned by Congress about data usage and these issues are coming to a head.

When you add these sparks to Gebru’s firing and its future implications, you can start to see a conflagration on the horizon.